Build a High-Performance PC for AI Training & Generation in Singapore

Custom AI workstation builds in Singapore — optimised for machine learning, LLM fine-tuning, Stable Diffusion, ComfyUI, and AI video generation. Expert component selection, assembly, OS and driver setup. Free consultation, 90-day warranty.

AI PC Build Services at BreakFixNow

Whether you’re training large language models, running Stable Diffusion locally, fine-tuning vision models, or building an AI video generation rig — BreakFixNow designs and assembles high-performance AI workstations tailored to your exact workload and budget. We handle everything from component selection and sourcing to assembly, BIOS tuning, OS installation, CUDA/ROCm driver setup, and benchmarking.

🖥️ AI PC Build Services

- Custom AI Workstation Build — Full build service from parts selection to final assembly. We spec your machine for your target workload: LLM training, image generation, video AI, or multi-task inference.

- GPU Selection & Installation — Expert advice on NVIDIA RTX, RTX PRO, and A-series GPUs for CUDA workloads, or AMD RX 7000-series for ROCm/PyTorch. Multi-GPU (NVLink/PCIe) configurations supported.

- CPU & Platform Selection — AMD Threadripper PRO, Intel Xeon W, or high-core-count consumer platforms (Ryzen 9, Core i9) matched to your I/O and memory bandwidth needs.

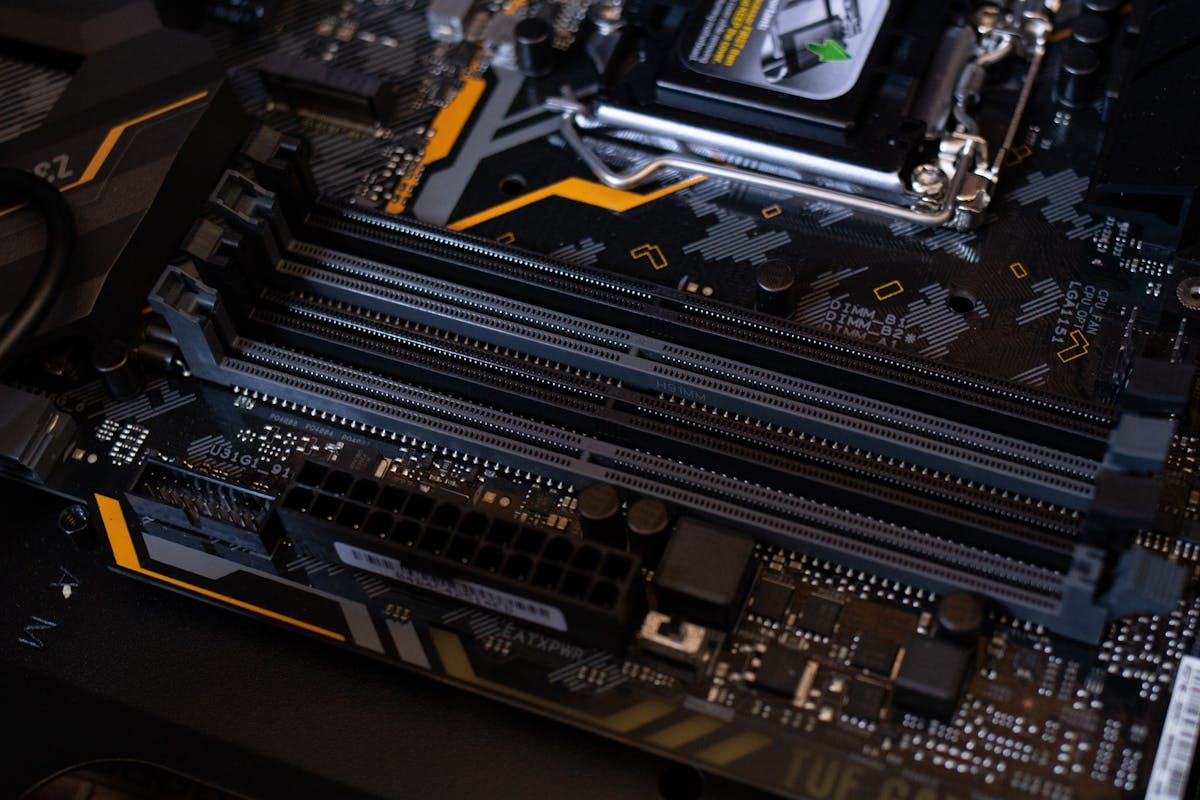

- RAM Configuration — ECC and non-ECC DDR5/DDR4 configuration. High-capacity builds (128GB–512GB) for large dataset preprocessing and model fine-tuning.

- Storage & Dataset Drive Setup — NVMe RAID for fast dataset I/O, large HDD arrays for dataset storage, and SSD caching configurations to maximise training throughput.

- Cooling & Power Configuration — AIO or custom-loop water cooling for sustained GPU and CPU boost clocks. High-wattage PSU selection and cable management for multi-GPU setups.

- OS & AI Stack Setup — Ubuntu or Windows 11 installation, CUDA toolkit, cuDNN, PyTorch, TensorFlow, Stable Diffusion WebUI (AUTOMATIC1111 / ComfyUI), and Ollama setup included on request.

- Remote Access & Network Setup — SSH, Tailscale, or VPN configuration so you can submit training jobs or access your AI rig remotely from anywhere.

🤖 Common AI Workloads We Build For

- ✅ LLM Training & Fine-Tuning — LoRA, QLoRA, full fine-tune of Llama, Mistral, Phi, Gemma models using Unsloth, Axolotl, or HuggingFace Transformers

- ✅ Local LLM Inference — Run Ollama, LM Studio, or llama.cpp with 24GB–80GB+ VRAM configurations for full-precision or quantised models

- ✅ Stable Diffusion & Image Generation — AUTOMATIC1111, ComfyUI, Forge — fast generation with high VRAM GPUs and NVMe scratch storage

- ✅ AI Video Generation — Wan2.1, CogVideoX, Mochi, HunyuanVideo — high VRAM multi-GPU builds for video diffusion workloads

- ✅ Computer Vision & Object Detection — YOLO, SAM2, DINO — fast inference and training on GPU-accelerated PyTorch stacks

- ✅ Data Science & ML Research — Jupyter, pandas, scikit-learn, XGBoost workloads requiring high-RAM CPU builds with fast NVMe storage

- ✅ Multi-Agent AI Systems — High-CPU-core builds for running multiple local AI agents or serving multiple users on a local inference server

GPU Recommendations by Use Case

| GPU | VRAM | Best For |
|---|---|---|
| NVIDIA RTX 4070 Ti SUPER / RTX 4080 | 16GB | Stable Diffusion, quantised local LLM inference (7B–13B), fine-tuning small models |
| NVIDIA RTX 4090 | 24GB | Stable Diffusion XL, quantised LLM inference (up to 30B), LoRA fine-tuning |
| NVIDIA RTX 5090 | 32GB | AI video generation, large model inference, multi-task AI workloads |
| 2× NVIDIA RTX 4090 (PCIe, no NVLink) | 2× 24GB | Quantised 70B model inference split across both GPUs, multi-GPU fine-tuning of mid-size LLMs |
| NVIDIA RTX A6000 / RTX PRO 6000 | 48GB / 96GB | Professional AI research, large batch training, ECC workloads |
| AMD RX 7900 XTX | 24GB | ROCm/PyTorch workloads, budget-friendly large VRAM option |

*Component availability subject to market supply. We advise on best available options at time of build.

Sample AI PC Build Configurations

| Build Tier | CPU | GPU | RAM | Storage | Est. Price (SGD) |
|---|---|---|---|---|---|
| Entry AI | Ryzen 9 7900X | RTX 4070 Ti SUPER (16GB) | 64GB DDR5 | 2TB NVMe + 4TB HDD | From $3,500 |
| Mid AI | Ryzen 9 7950X | RTX 4090 (24GB) | 128GB DDR5 | 4TB NVMe + 8TB HDD | From $6,500 |
| Pro AI | Threadripper PRO | 2× RTX 4090 | 256GB ECC DDR5 | 8TB NVMe RAID + 16TB HDD | From $14,000 |
| Enterprise AI | Intel Xeon W | RTX PRO 6000 / A6000 | 512GB ECC DDR5 | Custom NVMe RAID | On Request |

*Prices are indicative and subject to component costs at time of build. Free consultation and quotation provided.

Our Build Process

- Free Consultation — We discuss your AI workloads, target models, budget, and any future upgrade plans to spec the right build.

- Component Selection & Quotation — We provide a detailed parts list with pricing, alternatives, and justification for each component choice.

- Sourcing & Procurement — We source components from trusted suppliers. You can supply your own parts or have us procure everything.

- Assembly & Cable Management — Professional assembly with full cable management, thermal paste application, and system seating checks.

- BIOS Configuration & Stress Testing — BIOS tuning for memory XMP/EXPO, PCIe Gen 4/5 confirmation, power limits, and full stress test (Prime95, OCCT, 3DMark) before OS install.

- OS & AI Stack Installation — Ubuntu or Windows 11, CUDA toolkit, drivers, and any AI frameworks or tools you need pre-installed and verified working.

- Handover & Documentation — Full walkthrough of your new system, build documentation, and configuration notes provided on handover.

Why Choose BreakFixNow for Your AI PC Build?

- ✅ AI Workload Expertise — We understand VRAM requirements, PCIe bandwidth, NVLink, and the software stack — not just generic PC assembly.

- ✅ Unbiased Component Advice — We recommend what’s right for your workload, not what has the highest margin. NVIDIA and AMD options both considered.

- ✅ Full-Stack Setup — OS, CUDA, drivers, and AI frameworks all installed and tested before handover.

- ✅ Post-Build Support — We’re available after handover to help with software issues, driver updates, or model setup questions.

- ✅ 90-Day Warranty — All hardware work and installations covered by a 90-day warranty.

- ✅ Future-Proof Builds — We design with upgradeability in mind — extra PCIe slots, sufficient PSU headroom, and compatible platform choices.

📋 Visit Our 4 Locations

Bugis Village

3 New Bugis Street, CCP #106

Singapore 188867

⏰ Hours

11am – 9pm Daily

BedokMall

311 New Upper Changi Rd, #B1-14

Singapore 467360

⏰ Hours

11am – 9pm Daily

WestGate

03-K2 (Outside Singtel)

3 Gateway Road, Singapore 608532

⏰ Hours

12pm – 9pm Daily

Ang Mo Kio Central

#01-2533 (Opposite MaxiCash)

703 Ang Mo Kio Ave 8, Singapore 560703

⏰ Hours

11am – 9pm Daily

Other Services:

Laptop Repair | NAS & Workstation Repair | Motherboard Repair | SSD Upgrade | Screen Repair

AI PC Build Singapore: FAQ

How much does it cost to build an AI PC in Singapore?

Entry-level AI builds suitable for Stable Diffusion and local LLM inference start from around SGD $3,500. Mid-range builds with an RTX 4090 and 128GB RAM start from around $6,500. Professional multi-GPU builds for LLM training start from $14,000. We provide a detailed free quotation based on your specific requirements before any commitment.

Which GPU is best for running Stable Diffusion or AI image generation?

For Stable Diffusion (AUTOMATIC1111, ComfyUI, Forge), we recommend a minimum of 16GB VRAM. The RTX 4070 Ti SUPER and RTX 4080 are excellent mid-range choices. The RTX 4090 (24GB) is a strong consumer option for SDXL, ControlNet stacks, and video generation. For serious video AI (Wan2.1, HunyuanVideo), an RTX 5090 (32GB) or a dual RTX 4090 setup is ideal.

Can I run large language models (LLMs) locally on a custom PC?

Yes. With an RTX 4090 (24GB VRAM) you can run models up to about 30B parameters in quantised form (Q4/Q5) using Ollama or llama.cpp. For 70B models at good speed, we recommend a dual RTX 4090 setup (2× 24GB, with the model split across both cards) or a professional card with 48GB or more, such as the RTX A6000 (48GB) or RTX PRO 6000 (96GB). We’ll advise you on the right VRAM configuration for your target models.
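
As a rough illustration of the sizing logic behind the answer above, the sketch below estimates how much VRAM a model's weights need at a given quantisation level. The helper names, the 4.5 bits-per-weight figure for Q4-style quantisation, and the 20% overhead allowance (KV cache, activations, runtime buffers) are all assumptions for illustration. Real usage depends on context length, batch size, and the inference runtime.

```python
def estimate_vram_gb(params_billion: float, bits_per_weight: float,
                     overhead_fraction: float = 0.2) -> float:
    """Rough VRAM estimate in GB (1 GB = 1e9 bytes).

    weights = parameters x bytes per parameter, then a flat overhead
    allowance is added on top. Both defaults are illustrative assumptions.
    """
    weight_gb = params_billion * bits_per_weight / 8
    return weight_gb * (1 + overhead_fraction)


def fits(params_billion: float, bits_per_weight: float, vram_gb: float,
         overhead_fraction: float = 0.2) -> bool:
    """True if the estimated footprint fits in the given total VRAM."""
    return estimate_vram_gb(params_billion, bits_per_weight,
                            overhead_fraction) <= vram_gb


if __name__ == "__main__":
    # Assumed average of 4.5 bits per weight for a Q4-style quantisation.
    for size_b, vram in [(30, 24), (70, 24), (70, 48)]:
        need = estimate_vram_gb(size_b, 4.5)
        verdict = "fits" if fits(size_b, 4.5, vram) else "does not fit"
        print(f"{size_b}B @ ~4.5 bits: ~{need:.1f} GB needed, {verdict} in {vram} GB")
```

Under these assumptions a 30B model lands at roughly 20GB, which is why a single 24GB card is a comfortable fit, while a 70B model needs close to 48GB in total.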

Do you install CUDA, PyTorch, and the AI software stack?

Yes — we can pre-install the full AI stack on your build: Ubuntu or Windows 11, NVIDIA drivers, CUDA toolkit, cuDNN, PyTorch, TensorFlow, Stable Diffusion WebUI, ComfyUI, Ollama, and any other frameworks you need. Everything is tested and verified working before handover.
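
If you want to confirm the stack yourself after handover, a short script can ask PyTorch what it sees. This is a minimal sketch, not our actual verification procedure: `check_ai_stack` is a hypothetical helper name, and the script assumes PyTorch may or may not be installed in the active Python environment.

```python
import importlib.util


def check_ai_stack() -> dict:
    """Report whether PyTorch is installed and which GPUs it can use."""
    report = {
        "torch_installed": importlib.util.find_spec("torch") is not None,
        "cuda_available": False,
        "gpus": [],  # list of (device name, total memory in GB)
    }
    if report["torch_installed"]:
        import torch

        report["cuda_available"] = torch.cuda.is_available()
        # device_count() is 0 when no usable GPU is visible, so the loop is skipped.
        for index in range(torch.cuda.device_count()):
            props = torch.cuda.get_device_properties(index)
            report["gpus"].append((props.name, round(props.total_memory / 1e9, 1)))
    return report


if __name__ == "__main__":
    result = check_ai_stack()
    print("PyTorch installed:", result["torch_installed"])
    print("GPU acceleration available:", result["cuda_available"])
    for name, mem_gb in result["gpus"]:
        print(f"  {name}: {mem_gb} GB")
```

On a working build, each installed GPU should be listed with its full memory. If the list is empty, the usual suspects are a CPU-only PyTorch wheel or a driver mismatch.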

Should I use AMD or NVIDIA GPU for AI work?

NVIDIA is the stronger choice for most AI workloads due to mature CUDA support across PyTorch, TensorFlow, and most AI libraries. AMD (ROCm) support has improved significantly and is a viable budget-friendly option for PyTorch workloads on Linux. We’ll advise based on your specific software requirements and budget.

Can you build a multi-GPU AI workstation?

Yes. We build dual and quad GPU configurations using PCIe slots. NVLink is available on select professional-grade NVIDIA cards (such as the RTX A6000) for faster GPU-to-GPU transfers; the RTX 4090 does not support NVLink, so multi-4090 builds communicate over PCIe. For these PCIe-only setups (used for tensor or pipeline parallelism with frameworks like DeepSpeed), we ensure full PCIe Gen 4/5 bandwidth and appropriate PSU capacity.
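
To give a feel for what "appropriate PSU capacity" means in a multi-GPU build, here is a simple sizing sketch. The function name, the 150W allowance for drives, fans and motherboard, the 30% headroom, and the example wattages are illustrative assumptions, not a quotation. Actual sizing should use the rated power of the specific parts and allow for GPU transient spikes.

```python
import math


def recommend_psu_watts(gpu_watts: list, cpu_watts: float,
                        other_watts: float = 150, headroom: float = 0.3,
                        step: int = 50) -> int:
    """Suggest a PSU rating: summed component draw plus headroom,
    rounded up to the next multiple of `step` watts."""
    load = sum(gpu_watts) + cpu_watts + other_watts
    target = load * (1 + headroom)
    return int(math.ceil(target / step) * step)


if __name__ == "__main__":
    # Assumed figures: 450W per GPU, 350W workstation CPU, 170W desktop CPU.
    print("Dual GPU build:", recommend_psu_watts([450, 450], 350), "W")
    print("Single GPU build:", recommend_psu_watts([450], 170), "W")
```

With these assumed figures the dual-GPU build comes out at 1850W and the single-GPU build at 1050W, which shows how quickly a second high-end card pushes a system beyond typical consumer PSU ratings.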

How long does it take to build an AI PC?

Most builds are completed within 3–7 business days from parts confirmation — this includes assembly, testing, OS installation, and AI stack setup. Complex multi-GPU or custom water-cooled builds may take slightly longer. We’ll give you a timeline at the consultation stage.

Can I supply my own components?

Yes. If you already have some components (GPU, case, drives), we can work with what you have and source or advise on the remaining parts. We’ll review compatibility before proceeding and flag any potential issues.

Do you offer ongoing support after the build?

Yes. We offer post-build support for software issues, driver updates, model setup, and troubleshooting. Hardware repairs after handover are covered by our 90-day warranty. We’re also available to advise on software-side questions related to your AI setup.
